Crafting Our AI Assistant

Unleashing the Magic of AI: Crafting a Custom, ChatGPT-powered Voice Assistant with MagicMirror², PicoVoice (Porcupine), Whisper, and Mimic3.

Introduction - The Genesis of Our AI-Powered Voice Assistant

Both my wife and I rely on ChatGPT daily for a broad spectrum of tasks. Whether it's SEO, content editing, writing code, discovering tools and libraries, or analyzing market dynamics and sales strategies, we're continually amazed by how much Large Language Model (LLM) technologies like ChatGPT boost our productivity.

Driven by her passion for Product Management, my wife was intrigued by the idea of building something innovative around AI, partly to understand the strengths and weaknesses of such products firsthand. This passion not only led to our projects but also drove her to offer her product management expertise as a consultant. She's now available for consultations, and you can schedule a session with her via this Calendly link.

We eventually settled on two projects: a quick, straightforward one, and a more complex, long-term endeavor (stay tuned for the big reveal!). The quick one was meant for personal use, which led us to the idea of creating a Personal Voice Assistant.

We already owned a Google Home Mini and had become quite familiar with Google Assistant. Lately, however, our experience with it had been less than stellar: it either transcribed our requests inaccurately or failed to give a satisfactory answer, and sometimes any answer at all. We clearly needed a more reliable alternative, and that's when I started exploring the idea further.

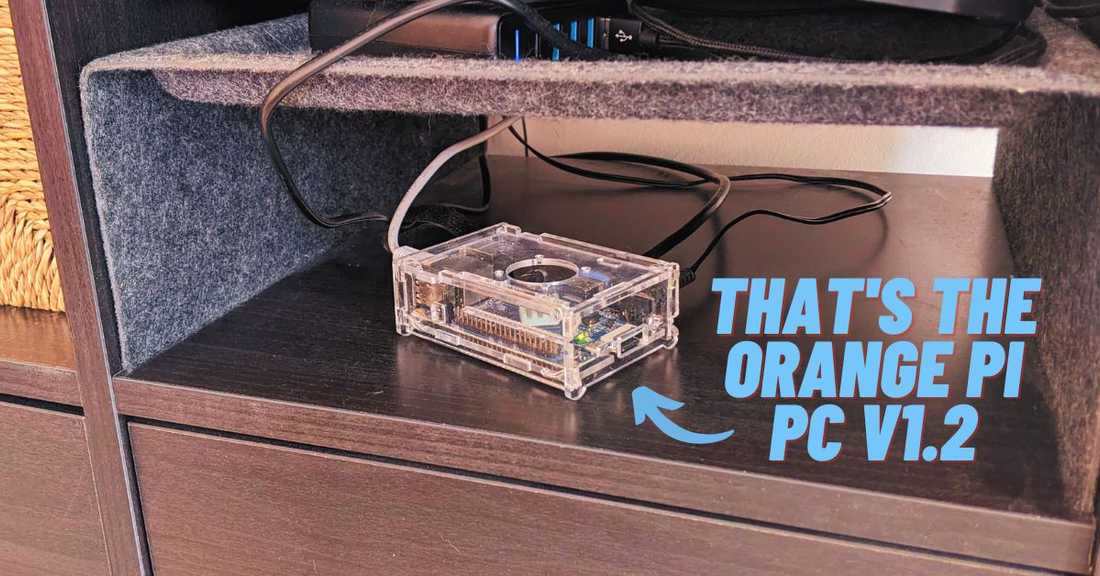

I remembered that an old OrangePI PC v1.2, bought from AliExpress about five years ago for a mere $10, was gathering dust. Our TV also had a free HDMI port (the other one was already used by the Chromecast). That gave me an exciting idea: why not build an 'idle' display for our TV, as an alternative to the Chromecast, and integrate an AI Assistant into it?

As a web developer, I initially found this task daunting, since it was outside my usual domain. But, bolstered by the possibilities ChatGPT opened up, I was convinced that no challenge was insurmountable. Working on it alongside my full-time job, we needed about a week to bring our idea to fruition. And now, let's dive into the results:

If you’d like to skip my blog article and just install this on your device (assuming you’re familiar with MagicMirror²) - here’s the link to the module: https://github.com/Nikro/MMM-WhisperGPT

Navigating the Sea of Options

Suddenly, it hit me - MagicMirror²! I vaguely remembered its existence, and a quick chat with ChatGPT confirmed that it was indeed a viable option. I dove into the deep end, exploring every possibility that MagicMirror² offered. What really caught my eye was the thriving community of dedicated builders working on it. I mean, just take a look at the sheer number of community-contributed modules here - MagicMirror² Community Modules.

There are tons of cool modules to play with: weather and calendar are part of the core, but the possibilities go way beyond that. Want to add maps or your Google Fit data to give you that extra push for your morning run? No problem. Feel like having financial or news widgets? They've got you covered.

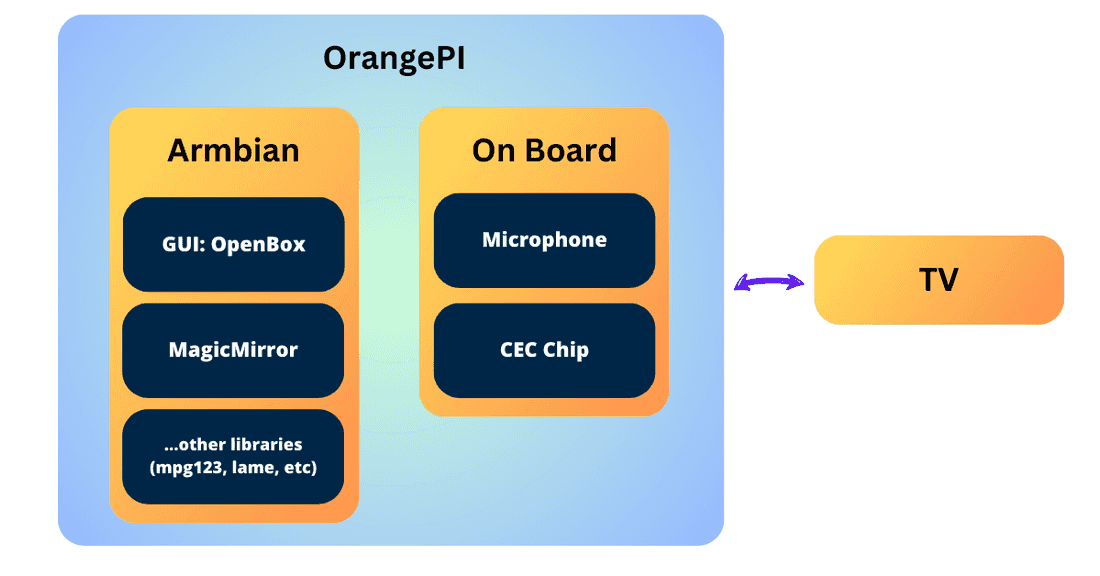

At that point, my OrangePI was running Armbian without any GUI. So I installed a super-light window manager on top of X11 (OpenBox was the winner, all thanks to good ol' ChatGPT). MagicMirror runs on Node.js and ships as an Electron app, so there's no need for Chromium (which needs Snap) or any other browser.

After that, it was all about tweaking the little details, like enabling auto-login for my user, and setting up OpenBox to automatically run MagicMirror.

I also slapped a "Loading..." image on as the OpenBox background to keep things interesting while MagicMirror was getting its act together. I tried to set up Plymouth (and an alternative to it) to show a splash screen while the OrangePi was booting, but that didn't pan out as I'd hoped. 😢

Diving Into MagicMirror² Module Development

Another big thumbs-up for MagicMirror² was the super active community. It's like a treasure trove of examples that you can learn from. Plus, they've got this template repo right here: MagicMirror² Module Template.

This handy tool lets you kick-start your custom module in no time. With a little help from my buddy, ChatGPT, I had the initial draft up and running on the same day. Granted, I still had to do some reading 📚 and have a friendly chat with our AI Overlord (😉) to get the hang of how MagicMirror modules work. But, I gotta say, the pace of progress was pretty darn exhilarating 🚀.

Here's what I needed to get started:

How It Works

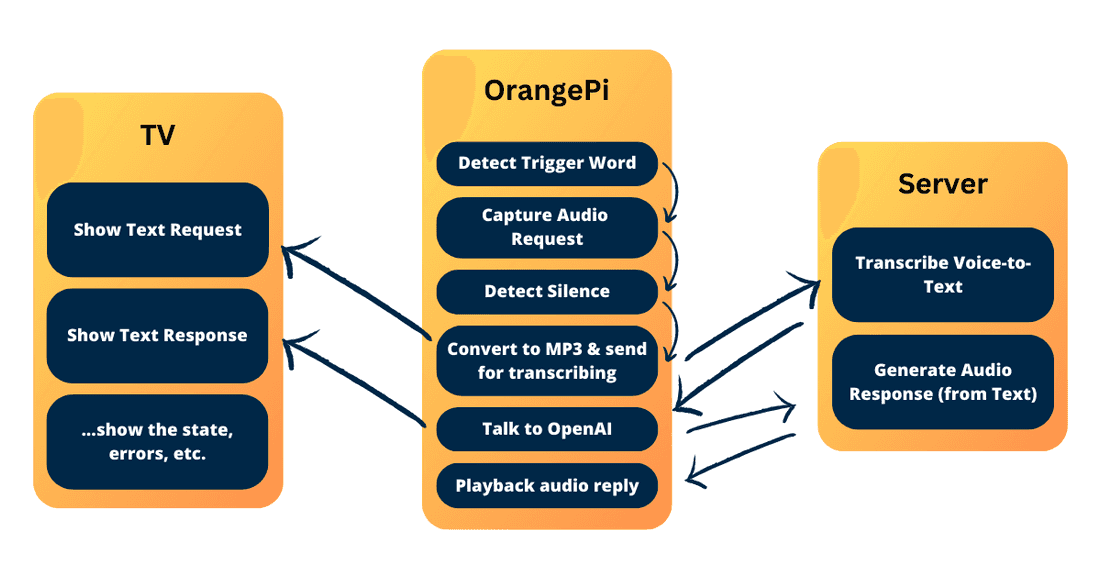

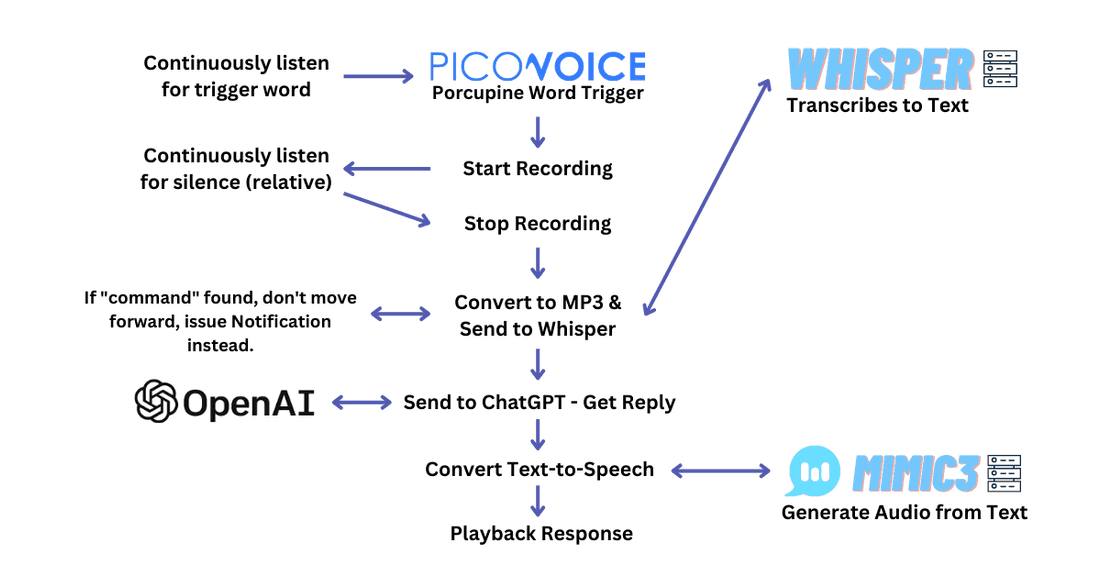

To bring our grand plan to life, I picked up a few tools along the way:

- For the trigger word (wake word), I went with PicoVoice / Porcupine. It's free and runs locally on the device, although you still need to register and use an API key (it only reports general usage).

- For speech-to-text, I used Whisper from OpenAI. However, I didn't use their raw source code; instead, I opted for this Dockerized web API built around it, which also offers a faster-whisper engine.

- For the AI (the brains of the operation), I relied on the ChatGPT API through LangChain. LangChain is a pretty cool library that offers a bunch of handy features right out of the box, such as conversation memory.

- For text-to-speech, I used Mimic3. My initial plan was to compile and run it on the OrangePI, but I ran into a heap of problems with the 32-bit ARMv7 CPU, ONNX Runtime, and Mimic3 itself. So I decided to host it on a remote server (just like Whisper).

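Here's how these components come together: Porcupine listens for the wake word, Whisper transcribes the recorded request, the text goes to ChatGPT via LangChain, and Mimic3 reads the answer aloud.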
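As a rough illustration, here is a minimal Python sketch of one turn of that loop, starting after the wake word has fired and the request has been recorded. It is not MMM-WhisperGPT's actual code (the module itself is JavaScript); `run_turn` and the `transcribe`, `ask_llm`, `speak` and `broadcast` callables are hypothetical stand-ins for the Whisper web service, the ChatGPT call via LangChain, Mimic3, and the event broadcast to other modules. The empty-transcript guard is also my own assumption.

```python
from typing import Callable


def run_turn(
    audio: bytes,
    transcribe: Callable[[bytes], str],
    ask_llm: Callable[[str], str],
    speak: Callable[[str], None],
    broadcast: Callable[[str], None],
) -> str:
    """Handle one recorded request; return which path ran ("empty", "command" or "chat")."""
    text = transcribe(audio).strip()
    if not text:
        # Nothing intelligible was said (e.g. an accidental trigger): do nothing.
        return "empty"
    if "command" in text.lower():
        # Requests mentioning "command" bypass ChatGPT and go to the other modules.
        broadcast(text)
        return "command"
    # Everything else is a normal question: ask the LLM and read the answer aloud.
    speak(ask_llm(text))
    return "chat"
```

Passing the stages in as functions keeps the control flow testable without a microphone or any network services.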

Now, a few notes about each piece of the puzzle:

- PicoVoice / Porcupine - Make sure to tune the trigger word and sensitivity. If it's too sensitive, it will trigger accidentally, which is not only annoying but also chews through your tokens. You can also train your own wake word: once you're registered, they offer a UI for that.

- Whisper & Mimic3 - I installed both services on my PC, which is reachable over the local network. The module assumes you're using the same web API wrapper around Whisper; if you want to use whisper.cpp or another Whisper implementation, you'll need to fork the module and adjust it. The same goes for Mimic3: I'm using its default Mimic3-Server, so switching to something else (like Nix-TTS) also means forking and tweaking.

- AI Memory - Right now, it's a simple memory system (last ~2k tokens, give or take), but I'm planning to upgrade it to something more permanent (and save on token usage) in the future.

- Commands - If you want to create custom commands, like "turn off the TV", you'll have to write your own module. Here's one I made: mmm-whispergpt-extra.

- Re-requesting - If you yell "Jarvis" loud enough, it should stop the AI from talking so you can submit a new request. 😂
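The "last ~2k tokens" memory can be pictured as a sliding window over the chat history: append each message, then evict the oldest ones until the total fits the budget. Below is a minimal Python sketch of that idea, assuming a whitespace word count as a stand-in for real token counting (an actual implementation would use the model's tokenizer). `TokenWindowMemory` is a hypothetical name, not what the module uses; LangChain has its own token-buffer memory classes for this.

```python
from collections import deque


class TokenWindowMemory:
    """Keep only the most recent messages that fit within a token budget."""

    def __init__(self, max_tokens: int = 2000) -> None:
        self.max_tokens = max_tokens
        self.messages = deque()  # (role, text) pairs, oldest first
        self.total = 0

    @staticmethod
    def count_tokens(text: str) -> int:
        # Rough approximation (assumption): one token per whitespace-separated word.
        return len(text.split())

    def add(self, role: str, text: str) -> None:
        self.messages.append((role, text))
        self.total += self.count_tokens(text)
        # Evict the oldest messages until we're back under budget,
        # but always keep the newest message, even if it alone is too long.
        while self.total > self.max_tokens and len(self.messages) > 1:
            _, old_text = self.messages.popleft()
            self.total -= self.count_tokens(old_text)

    def history(self) -> list:
        return list(self.messages)
```

Keeping at least the newest message means a single very long question is still sent, even if it alone exceeds the budget.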

You can tweak the integration to your heart's content; the README.md explains all the custom parameters you can set. One obvious candidate is the language.

For instance, if you use the "base" model (not "base.en"), you can use any language Whisper supports. You'll then need to adjust the system message (ideally written in the target language) and `whisperLanguage`. Lastly, pick a matching voice for Mimic3 from this page: Mimic3 Voices.

Once you've tweaked these settings, restart OpenBox (or just MagicMirror) and test it out. Here's an example in Spanish.
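Switching languages mostly comes down to keeping the Whisper model and the language consistent. The minimal Python sketch below assumes the wrapper is whisper-asr-webservice (model chosen server-side via its `ASR_MODEL` setting, transcription via `POST /asr` with `task`, `language` and `output` query parameters and the audio uploaded as an `audio_file` form field); `whisper_asr_url` is a hypothetical helper, so check the wrapper's documentation before relying on it. It refuses the English-only `.en` models for any other language.

```python
from urllib.parse import urlencode


def whisper_asr_url(base_url: str, model: str, language: str) -> str:
    """Build a transcription URL for the (assumed) whisper-asr-webservice API.

    The model is configured on the server; it's passed here only for validation.
    """
    # English-only checkpoints ("tiny.en", "base.en", ...) can't transcribe other languages.
    if model.endswith(".en") and language != "en":
        raise ValueError(f"model {model!r} is English-only; use a multilingual model such as 'base'")
    query = urlencode({"task": "transcribe", "language": language, "output": "json"})
    return f"{base_url.rstrip('/')}/asr?{query}"
```

For example, `whisper_asr_url("http://192.168.1.10:9000", "base", "es")` gives `http://192.168.1.10:9000/asr?task=transcribe&language=es&output=json` (the address is a placeholder; 9000 is the wrapper's usual port).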

---

How To Set Up Your Own Assistant at Home

Here’s a step-by-step guide to help you set up your own home assistant. However, remember that you might encounter various issues that you'll need to troubleshoot yourself (ChatGPT might be your best friend here 😉).

Step 1. Pick your device, set up the OS.

In my case, it was an OrangePI PC v1.2 and Armbian, but your journey might be different. Just ensure you've got a lightweight window manager and that your device can handle MagicMirror.

Step 2. Install MagicMirror².

Follow the official installation steps and check that your device runs MagicMirror smoothly.

Step 3. Test Audio / Microphone.

Here's how you can do that:

- To test audio output, use the `aplay` command. Try running `aplay /usr/share/sounds/alsa/Front_Center.wav`. If your speakers are working correctly, you should hear a voice saying "Front Center".

- To test your microphone, use the `arecord` command. Try `arecord -d 10 test.wav`, which records a 10-second audio clip, then play it back with `aplay test.wav`.

- To adjust the volume of your input/output devices, use `alsamixer`: type `alsamixer` in your terminal and use the arrow keys to change the levels. Make sure to select the correct sound card with F6.
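If the playback is too quiet to judge, a quick numeric check helps. This minimal Python sketch uses only the standard library to report the peak level of a recorded WAV file; values near 0 mean the microphone captured (almost) silence. It handles `arecord`'s default unsigned 8-bit format and signed 16-bit PCM. `peak_level` is a hypothetical helper, not part of any tool mentioned here.

```python
import array
import sys
import wave


def peak_level(path) -> float:
    """Return the peak amplitude of a PCM WAV file as a fraction of full scale (0.0-1.0)."""
    with wave.open(path, "rb") as wav:
        width = wav.getsampwidth()
        frames = wav.readframes(wav.getnframes())
    if width == 1:
        # 8-bit WAV (arecord's default U8 format) is unsigned, centred on 128.
        samples = [abs(b - 128) for b in frames]
        full_scale = 128
    elif width == 2:
        # 16-bit WAV is signed little-endian; swap bytes on big-endian hosts.
        pcm = array.array("h", frames)
        if sys.byteorder == "big":
            pcm.byteswap()
        samples = [abs(s) for s in pcm]
        full_scale = 32768
    else:
        raise ValueError(f"unsupported sample width: {width} bytes")
    return max(samples, default=0) / full_scale
```

Porcupine expects 16 kHz, 16-bit mono audio, so recording with `arecord -f S16_LE -r 16000 -c 1 -d 10 test.wav` and checking `peak_level("test.wav")` also confirms that your microphone works in that format.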

Step 4. Configure MagicMirror to your liking

This is where you get to configure the default MagicMirror modules. Feel free to clone and add any other modules you might find useful.

Step 5. Add MMM-WhisperGPT.

Clone the MMM-WhisperGPT module and add the correct properties as specified in the README.md file.

Step 6. Make sure you have a laptop or PC that can run Whisper and Mimic3.

You can use this Whisper Web Service for your Whisper needs. I opted for the GPU version and managed everything through Docker Compose.

Use this for your Mimic3 - that’s my docker-compose.yml:

You may also want to add systemd entries so that both services start automatically when your laptop/PC boots.
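Once both services are up, the MagicMirror module only needs to talk to them over HTTP. As an illustration, here is a minimal Python sketch of a Mimic3 client, assuming Mimic3's built-in web server (`mimic3-server`, port 59125 by default) and its `/api/tts` endpoint, which takes the text as the POST body and the voice as a query parameter and returns WAV audio. `mimic3_tts_request` and `synthesize` are hypothetical helper names, and `en_UK/apope_low` is just Mimic3's default English voice.

```python
import urllib.parse
import urllib.request


def mimic3_tts_request(base_url: str, text: str, voice: str = "en_UK/apope_low") -> urllib.request.Request:
    """Build a POST request asking a Mimic3 server to synthesise `text` as WAV audio."""
    # The voice goes in the query string; "/" and a speaker suffix like "#p236" get URL-encoded.
    query = urllib.parse.urlencode({"voice": voice})
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/api/tts?{query}",
        data=text.encode("utf-8"),
        headers={"Content-Type": "text/plain; charset=utf-8"},
        method="POST",
    )


def synthesize(base_url: str, text: str, voice: str = "en_UK/apope_low", timeout: float = 30.0) -> bytes:
    """Send the request and return the WAV bytes (requires a running Mimic3 server)."""
    with urllib.request.urlopen(mimic3_tts_request(base_url, text, voice), timeout=timeout) as resp:
        return resp.read()
```

For example, `synthesize("http://192.168.1.50:59125", "Hello from Jarvis")` returns WAV bytes you could write to a file and play with `aplay` (the IP address is a placeholder for your PC).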

Step 7. Get an OpenAI API key

In my experience, the free credit provided by OpenAI didn't work: the key only started working after I added a credit card and was charged $5. Once you have the key, paste it into your MagicMirror config.js file.

Step 8. Final touches

If you’re using OpenBox, configure `~/.config/openbox/autostart`:

Note that I used `feh` to set the "Loading" background image. I also redirect all output into `mm.log` to make debugging easier if something goes wrong. If you want to run in development mode and log everything, you can use `npm start dev >> /home/nikro/mm-dev.log 2>&1`.

Expanding the Module with Your Own Custom Commands

You're free to extend this module with your own custom commands. Here's how commands currently work: if the transcribed request contains the word "command" (for example, "Jarvis, command: turn off the TV"), it isn't sent to ChatGPT. Instead, the module broadcasts an event to all the other modules.

Another module can listen for that event, match the request against regular expressions, and execute predetermined commands. For more information, check out this link: https://github.com/Nikro/mmm-whispergpt-extra

My OrangePI comes with a CEC chip that lets it control the TV over HDMI. Here's what this module does for me:

- "Jarvis, command: Chromecast" will switch the source to Chromecast (HDMI 1).

- "Jarvis, command: Turn off/on the TV" is self-explanatory.

- "Jarvis, {any request}" will switch the source to OrangePI, taking focus away from Chromecast.
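The regex matching described above could look roughly like the following minimal Python sketch. It is not the code of mmm-whispergpt-extra: the patterns cover the commands listed here, and the action names (`tv_off`, `switch_to_chromecast`, ...) are hypothetical labels that a real module would map to HDMI-CEC calls. The last case, switching to the OrangePI on any request, happens on every wake word and needs no matching.

```python
import re
from typing import Optional

# Hypothetical action labels; a real module would map them to HDMI-CEC calls.
# Patterns are lowercase because the request text is lowercased before matching.
COMMAND_PATTERNS = [
    (re.compile(r"\bchromecast\b"), "switch_to_chromecast"),
    (re.compile(r"\bturn\s+off\b.*\btv\b"), "tv_off"),
    (re.compile(r"\bturn\s+on\b.*\btv\b"), "tv_on"),
]


def match_command(text: str) -> Optional[str]:
    """Return the action for a 'command' request, or None if nothing matches."""
    # Only look at what follows the "command" keyword, e.g. "Jarvis, command: turn off the TV".
    _, sep, rest = text.lower().partition("command")
    if not sep:
        return None
    for pattern, action in COMMAND_PATTERNS:
        if pattern.search(rest):
            return action
    return None
```

Matching only the text after the "command" keyword keeps ordinary questions like "What's on TV tonight?" from switching the TV off.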

These might seem like simple commands, but they're incredibly handy. Maybe you'd also like to adjust the volume via voice, or install VLC and play certain YouTube videos. The sky's the limit with what you can do!

Wrapping Up

This personal project was a fun and insightful journey. It gave me the opportunity to tinker with LangChain (although in a limited way, for now), play with Whisper, and explore how the Text-to-Speech landscape has evolved with the emergence of large language models and transformer models.

The more we interacted with our AI Assistant, the more gaps we noticed. Some we've already fixed, while others we'll address over time. One amusing instance was when Jarvis introduced itself by saying "Jarvis," which triggered the wake word and sent it into an infinite loop 🤣. We fixed this by resetting the state and cutting off the audio as soon as the trigger word is received. We're also still working on a tiny self-hosted AI model that would classify requests into three categories: legitimate requests, commands, and accidental triggers. That would let us drop the word "command" and save some tokens in the process.
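The fix for the "Jarvis" loop boils down to one rule: the wake word always wins. Here is a minimal Python sketch of that rule as a tiny state machine; the states, the `Assistant` class and the request counter used to discard stale replies are my own illustrative assumptions, not the module's implementation.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()
    LISTENING = auto()
    THINKING = auto()
    SPEAKING = auto()


class Assistant:
    """Tiny state machine: the wake word always wins, whatever we were doing."""

    def __init__(self) -> None:
        self.state = State.IDLE
        self.audio_playing = False
        self.pending_request = 0  # bumped on every wake word to invalidate in-flight replies

    def on_wake_word(self) -> None:
        # Cut off any speech immediately (so Jarvis can't keep re-triggering itself)
        # and forget any reply that is still on its way.
        self.audio_playing = False
        self.pending_request += 1
        self.state = State.LISTENING

    def on_transcript_sent(self) -> int:
        self.state = State.THINKING
        return self.pending_request  # identifies the request that was just sent

    def on_reply(self, request_id: int) -> bool:
        """Start speaking a reply unless a newer wake word made it stale."""
        if request_id != self.pending_request or self.state is not State.THINKING:
            return False
        self.state = State.SPEAKING
        self.audio_playing = True
        return True

    def on_playback_finished(self) -> None:
        self.audio_playing = False
        self.state = State.IDLE
```

The counter matters because a ChatGPT reply may still arrive after the user has interrupted; without it, the old answer would start playing over the new request.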

The good news is that you don't have to be a data scientist or an AI/ML developer to create impressive projects with these tools. You can use existing APIs or web service wrappers to run them and build projects around them. LangChain, in particular, is a fantastic source of inspiration. I strongly encourage everyone to explore it; it's now available in two versions: Python and JavaScript.

On a side note, I'm thrilled to share that I'm offering consultancy services for AI integrations and product development. If you're looking to bring the power of AI into your products or services but are unsure of where to start, I'd be happy to help. I bring a hands-on understanding of the tools and a passion for creating practical, user-friendly AI solutions.

Do you have any projects involving OpenAI or any other large language model tool? I'd love to hear about them in the comments!

Thanks for joining us on this journey!

💡 Inspired by this article?

If you found this article helpful and want to discuss your specific needs, I'd love to help! Whether you need personal guidance or are looking for professional services for your business, I'm here to assist.
